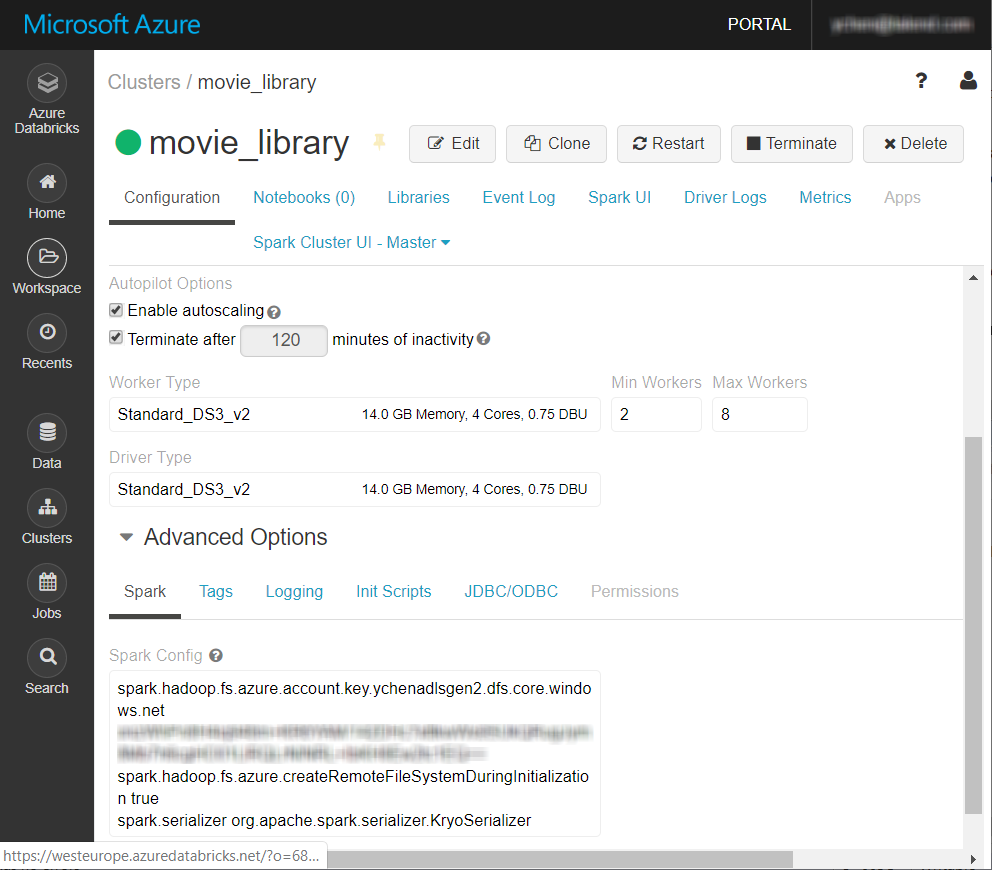

Adding Azure-specific properties to access the Azure storage system from Databricks

Add the Azure-specific properties to the Spark configuration of your Databricks cluster so that the cluster can access Azure Storage.

You need to do this only when you want your Talend Jobs for Apache Spark to use Azure Blob Storage or Azure Data Lake Storage with Databricks.
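As an illustration of what such properties can look like, the following Python sketch builds Spark configuration lines for account-key access. It assumes the Hadoop Azure connector property `fs.azure.account.key.<account>.<endpoint>` passed through Spark's `spark.hadoop.` prefix. This page does not list the exact properties, so verify them against the Talend and Databricks documentation for your versions. The helper name `azure_spark_conf_line`, the account name, and the key are hypothetical placeholders.

```python
# Sketch only: builds "key value" lines for a cluster's Spark configuration.
# Assumption: account-key authentication through the Hadoop Azure connectors.
# The storage account name and access key used here are placeholders.

# Endpoint suffix per storage service (Hadoop Azure connector conventions).
ENDPOINT_SUFFIX = {
    "blob": "blob.core.windows.net",      # Azure Blob Storage
    "adls_gen2": "dfs.core.windows.net",  # Azure Data Lake Storage Gen2
}


def azure_spark_conf_line(storage_account: str, access_key: str,
                          service: str = "blob") -> str:
    """Return one 'key value' line for the Spark configuration field."""
    if service not in ENDPOINT_SUFFIX:
        raise ValueError(f"unsupported service: {service!r}")
    key = (
        "spark.hadoop.fs.azure.account.key."
        f"{storage_account}.{ENDPOINT_SUFFIX[service]}"
    )
    return f"{key} {access_key}"


if __name__ == "__main__":
    # Hypothetical account name; replace the key with your own secret.
    print(azure_spark_conf_line("mystorageaccount", "<access-key>", "blob"))
    print(azure_spark_conf_line("mystorageaccount", "<access-key>", "adls_gen2"))
```

Each generated line would go into the Spark configuration of the cluster, one property per line. Avoid committing real access keys to source control. Azure Data Lake Storage Gen1 uses a different, OAuth-based set of properties and is not covered by this sketch.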

Before you begin

- Ensure that your Spark cluster in Databricks has been created, is running, and uses a version supported by the Studio. If you use Azure Data Lake Storage Gen 2, only Databricks 5.4 is supported.

  For further information, see Create Databricks workspace in the Azure documentation.

- You have an Azure account.

- The Azure Blob Storage or Azure Data Lake Storage service to be used has been created, and you have the appropriate permissions to access it. For further information about Azure Storage, see Azure Storage tutorials in the Azure documentation.