Uploading files to DBFS (Databricks File System)

Uploading a file to DBFS makes it available for your big data Jobs to read and process. DBFS (Databricks File System) is the big data file system used in this example.

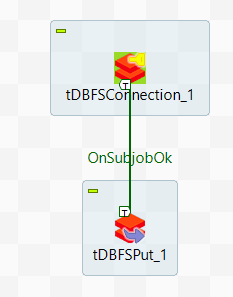

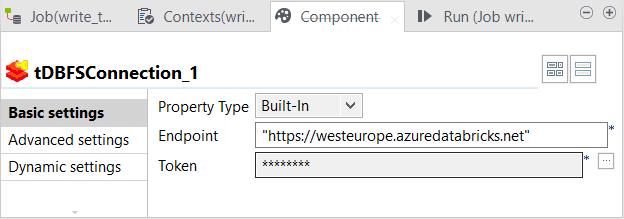

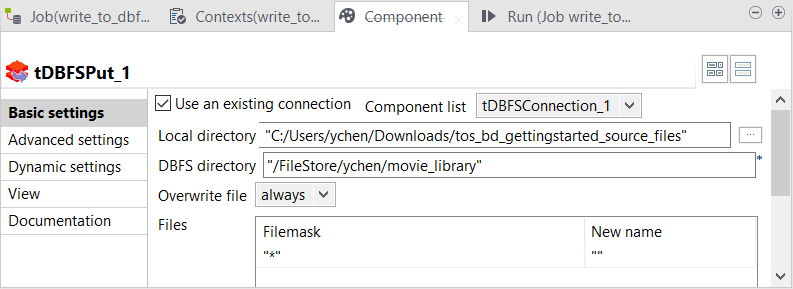

In this procedure, you create a Job that writes data to DBFS. To get the files needed for this use case, download tdf_gettingstarted_source_files.zip from the Downloads tab in the left panel of this page.

Before you begin

- You have launched Talend Studio and opened the Integration perspective.

Procedure

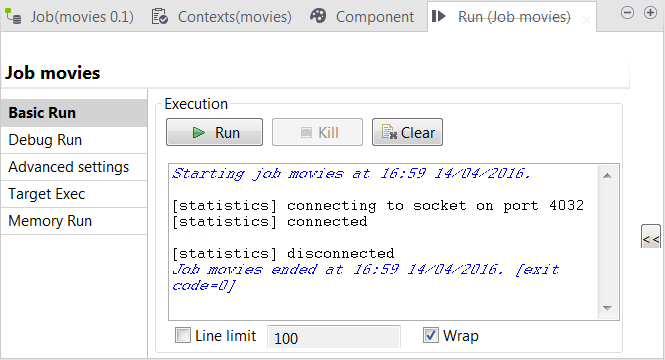

Results

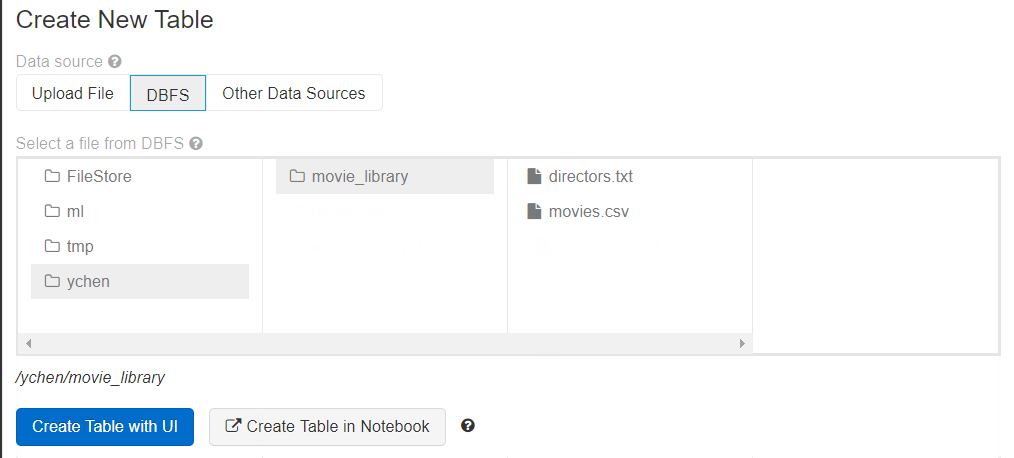

When the Job is done, the files you uploaded can be found in DBFS in the directory you have specified.
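
If you want to confirm the result outside the Studio, one option is to list the target directory through the Databricks DBFS REST API. The following is a minimal sketch, not part of the Talend procedure: it assumes a workspace URL and a personal access token that you supply, and the function names (`build_list_request`, `file_names`, `list_dbfs_files`) are hypothetical helpers introduced here for illustration. It calls the `/api/2.0/dbfs/list` endpoint and returns the names of the files found in the directory.

```python
import json
import urllib.parse
import urllib.request


def build_list_request(host, token, directory):
    """Build a GET request for the DBFS list endpoint (/api/2.0/dbfs/list)."""
    query = urllib.parse.urlencode({"path": directory})
    url = f"{host.rstrip('/')}/api/2.0/dbfs/list?{query}"
    # The token is sent as a bearer token in the Authorization header.
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})


def file_names(list_response):
    """Return the sorted base names of the non-directory entries in a list response."""
    return sorted(
        entry["path"].rsplit("/", 1)[-1]
        for entry in list_response.get("files", [])
        if not entry.get("is_dir", False)
    )


def list_dbfs_files(host, token, directory):
    """Call the endpoint and return the file names found in the directory."""
    with urllib.request.urlopen(build_list_request(host, token, directory)) as resp:
        return file_names(json.load(resp))
```

To use it, call `list_dbfs_files` with your own workspace URL, your token, and the DBFS directory you specified in the Job, then compare the returned names with the files you uploaded.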
